Finger Tables and O(log N) Routing: Chord’s Magic

Part 2b of the Torus DHT Blog Series

In the previous post, we covered how Chord organizes nodes and keys on a ring using consistent hashing. We understand the storage model—who owns which keys.

Now comes the critical question: how do you find a key?

This is where Chord goes from “interesting idea” to “holy shit, that’s clever.” The finger table mechanism is one of those rare algorithmic insights that seems obvious in hindsight but was genuinely novel when first published.

Let me walk you through it.

The Naive Approach: Why O(N) is Unacceptable

The simplest approach: ask every node in sequence.

Node A wants to find the key with hash value 42. It starts asking:

- Node B: “Do you store key 42?” → No

- Node C: “Do you store key 42?” → No

- Node D: “Do you store key 42?” → No

- …keep going until you find it

Complexity: O(N) where N is the number of nodes.

In a tiny network of 10 nodes, this is fine. But remember BitTorrent’s DHT? Tens of millions of concurrent nodes. Checking millions of nodes for every lookup is absolutely unacceptable.

Even in a modest 1,000-node network, O(N) lookups would be painfully slow. At scale, it’s a non-starter.

We need something way better.

The Insight: Exponential Shortcuts

Here’s the key insight that makes Chord work:

What if each node maintained shortcuts to nodes at exponentially increasing distances around the ring?

Instead of only knowing your immediate neighbor, you know nodes that are:

- 1 position away

- 2 positions away

- 4 positions away

- 8 positions away

- 16 positions away

- 32 positions away

- …and so on

These shortcuts are called fingers, and collectively they form your finger table.

The beauty: with each hop, you can skip exponentially larger portions of the ring. This is like binary search on a circular array.

The Finger Table Structure

Each node maintains an m-entry finger table, where m is the number of bits in the identifier space. For Chord’s 160-bit IDs, that’s 160 entries.

The i-th finger table entry for node n contains:

- start:

(n + 2^(i-1)) mod 2^m - successor: the first node that succeeds the start value

In plain English: the i-th finger points to the first node that is at least 2^(i-1) positions ahead of you on the ring.

Concrete Example

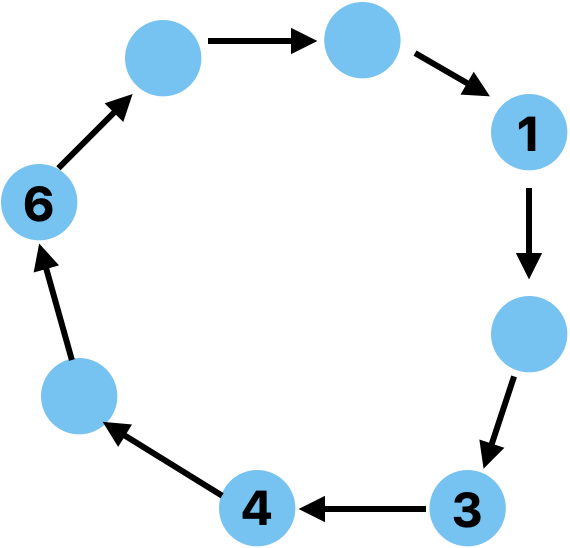

Let’s build finger tables for our 4-node ring (3-bit space, positions 0-7):

Nodes: 1, 3, 4, 6

Node 1’s finger table:

| i | Start (1 + 2^(i-1)) | Interval | Successor |

|---|---|---|---|

| 1 | 2 | [2, 3) | Node 3 |

| 2 | 3 | [3, 5) | Node 3 |

| 3 | 5 | [5, 1) | Node 6 |

Node 3’s finger table:

| i | Start (3 + 2^(i-1)) | Interval | Successor |

|---|---|---|---|

| 1 | 4 | [4, 5) | Node 4 |

| 2 | 5 | [5, 7) | Node 6 |

| 3 | 7 | [7, 3) | Node 1 |

Node 4’s finger table:

| i | Start (4 + 2^(i-1)) | Interval | Successor |

|---|---|---|---|

| 1 | 5 | [5, 6) | Node 6 |

| 2 | 6 | [6, 0) | Node 6 |

| 3 | 0 | [0, 4) | Node 1 |

See the pattern?

- Finger 1 points close by (2^0 = 1 position ahead)

- Finger 2 points a bit further (2^1 = 2 positions ahead)

- Finger 3 points halfway around the ring (2^2 = 4 positions ahead)

In a full 160-bit space, finger 160 would point nearly to the opposite side of the ring - 2^159 positions away.

The Routing Algorithm

Now that each node has a finger table, finding a key becomes elegant:

To find the successor of key k from node n:

if k is between n and n's immediate successor:

return n's successor (we found it!)

else:

find the node in n's finger table that most closely precedes k

ask that node to continue the search

That’s it. Two cases, simple logic.

The key insight: each hop covers approximately half the remaining distance to the target. Just like binary search.

Worked Example: Finding a Key

Let’s trace a lookup. Node 1 wants to find the successor of key 0.

Step 1: Node 1 checks

- Is key 0 between node 1 and its immediate successor (node 3)?

- No, because we move clockwise: 1 → 2 → 3. Key 0 is not in range [1, 3].

- Check finger table: which finger most closely precedes key 0?

- Finger 3 points to node 6. That’s the closest predecessor of key 0.

- Forward query to node 6

Step 2: Node 6 checks

- Is key 0 between node 6 and its successor (node 1)?

- Yes! Moving clockwise: 6 → 7 → 0 → 1. Key 0 falls in range (6, 1].

- Return node 1 as the successor

Result: 2 hops to find the answer.

Let’s try another. Node 1 wants to find key 5.

Step 1: Node 1 checks

- Is key 5 between node 1 and node 3? No.

- Closest preceding finger? Finger 3 points to node 6.

- Forward to node 6

Step 2: Node 6 checks

- Is key 5 between node 6 and its successor (node 1)?

- No. Range is (6, 1], but key 5 is at position 5.

- Closest preceding finger to key 5: looking at node 6’s table, finger 2 points to node 6 itself (no closer node).

- So this means key 5 is in node 6’s own range!

- Return node 6

Result: 2 hops.

(This example shows a subtle point: sometimes the finger table points to yourself, which means you’re the answer.)

Why This is O(log N)

Here’s the mathematical intuition:

- The ring has 2^m possible positions (m = 160 in real Chord)

- Each node has m fingers, pointing at exponential intervals

- Each hop reduces the remaining distance by roughly half

- After m hops maximum, you’ve covered the entire ring

Since m = log₂(2^m), and in a well-populated ring with N nodes, the identifier space is roughly 2^m ≈ N, we get O(log N) hops.

Practically:

The average path length is about (1/2) × log₂(N) because each hop typically halves the remaining distance, and roughly half the bits in the binary representation of the distance are already zeros (requiring no correction).

- Network with 1,000 nodes (≈ 2^10): ~5 hops average

- Network with 1,000,000 nodes (≈ 2^20): ~10 hops average

- Network with 1,000,000,000 nodes (≈ 2^30): ~15 hops average

The worst case is log₂(N) hops, but you’ll rarely hit it.

That’s the power of exponential finger spacing. It scales logarithmically, which means it scales beautifully.

BitTorrent’s DHT serves 15-25 million concurrent users, and ~12-15 hops is completely acceptable for peer discovery.

Maintaining Finger Tables: The fix_fingers Protocol

Here’s a practical problem: when nodes join or leave, finger tables become stale.

If node 2 joins between nodes 1 and 3, node 1’s finger 1 should probably point to node 2 instead of node 3. But how does node 1 learn about node 2?

Chord uses a background protocol called fix_fingers that runs periodically (just like stabilization).

The algorithm:

Every node, periodically:

Pick a random finger index i

Recalculate what finger[i].start should be

Find the current successor of that start value

Update finger[i] to point to that successor

By randomly updating one finger at a time, over multiple iterations, the entire finger table gradually converges to correctness.

Why random instead of sequential? To avoid synchronization issues where all nodes are updating the same finger simultaneously, potentially causing load spikes.

In Torus, I update fingers more aggressively during stabilization, but the principle is the same: periodic, incremental updates that eventually converge.

Fault Tolerance: Successor Lists

Remember from the previous post: nodes crash. Networks partition. Failures are the norm, not the exception.

Chord handles this with successor lists. Instead of maintaining just one successor, each node maintains a list of its r immediate successors.

The original Chord paper recommends r = O(log N). Torus uses r = 8, which provides good fault tolerance for medium-sized networks.

How It Works

If your immediate successor fails:

- Try the next node in your successor list

- If that fails too, try the next one

- Keep trying until you find a live successor

With proper tuning, the probability of the ring becoming partitioned (unable to route queries) is vanishingly small even with high failure rates.

The math: With r = 2 log₂ N successors, where nodes fail independently, you can lose roughly N^(1/2) nodes simultaneously and still maintain connectivity with high probability.

That’s remarkable resilience for a decentralized system.

Replication Strategy

Successor lists also provide a natural replication mechanism:

Store each key not just at its successor, but at the next r successors as well.

Now if a node crashes, its keys aren’t lost, they’re replicated at the next nodes in the ring. When the failure is detected:

- The replica at the next successor becomes the primary

- A new replica is created further down the successor list

- The system continues operating

This is how Torus implements replication (with r=3 for a 3x replication factor). It’s elegant because it leverages existing successor list machinery, no separate replication protocol needed.

Comparing Chord to Other DHTs

Quick comparison with the other major DHT designs:

Chord:

- Ring topology, finger tables

- O(log N) routing, O(log N) state per node

- Simplest to understand and implement

- Stabilization is straightforward but can be slow

Kademlia (BitTorrent, IPFS):

- XOR distance metric, k-buckets

- O(log N) routing, O(log N) state per node

- Parallel lookups (queries multiple nodes simultaneously)

- More complex but often faster in practice

Pastry:

- Prefix-based routing, routing tables + leaf sets

- O(log N) routing, O(log N) state per node

- Proximity-aware (routes through low-latency paths)

- Most complex, but sophisticated locality properties

For learning DHTs? Chord is the best choice. You can actually understand it completely, from first principles.

For production at BitTorrent’s scale? Kademlia’s parallel lookups win.

For latency-sensitive applications? Pastry’s proximity routing helps.

There’s no universal “best”, only the right tool for your use case.

The Implementation Gap

Reading about Chord and understanding it: Few days Implementing Chord correctly: several weeks.

Here are some real challenges I hit when building Torus:

Wraparound arithmetic: The identifier space wraps at 2^m. In a 3-bit space (0-7), is key 2 between node 6 and node 4?(Yes! Key 2 falls in the interval (6, 4] when wrapping through 0). Get this wrong and keys route to incorrect nodes.

Concurrent finger table updates: Multiple goroutines updating the finger table simultaneously. One missing mutex and you’ve got corrupted pointers.

Network timeouts: How long to wait for a response before assuming a node is dead? Too short: false positives. Too long: slow lookups.

Bootstrapping: The very first node in the ring is its own successor and predecessor. Handle that wrong and the second node can’t join.

These aren’t exotic edge cases, they’re everyday realities of distributed systems.

What We’ve Covered

Let’s recap Part 2a and 2b together:

Part 2a: The Ring and Consistent Hashing

- Why circles provide total order

- 160-bit identifier space and uniform distribution

- Consistent hashing minimizes data movement (K/N keys on average)

- Join/leave operations are local events

Part 2b: Finger Tables and Routing

- Exponential shortcuts enable O(log N) routing

- Finger table structure with m=160 entries

- Routing algorithm: binary search on a ring

- fix_fingers maintains correctness

- Successor lists provide fault tolerance and replication

This is the complete Chord protocol at a conceptual level. You now understand both how keys are stored (the ring) and how keys are found (finger tables).

What’s Next

In Part 3, we’ll shift from theory to practice: Building Torus - Architecture Decisions and Trade-offs.

We’ll cover:

- Why Go for the backend (goroutines, channels, built-in concurrency)

- gRPC vs REST vs raw TCP for inter-node communication

- The frontend decision: Next.js, React, and D3.js visualization

- The most important architectural choice: making the dashboard read-only

And I’ll show you actual code from Torus — real Go implementations of finger tables, stabilization, and routing.

If you’ve made it through both parts of this Chord deep-dive, you understand the protocol conceptually. Now let’s see what it takes to make it real.

Further Reading:

- Chord: A Scalable Peer-to-peer Lookup Service for Internet Applications - Read this after these posts for deeper understanding

- How to Make Chord Correct - Pamela Zave’s rigorous analysis of edge cases

- Comparing DHT Designs Under Churn - Academic performance comparison

- Kademlia: A Peer-to-peer Information System Based on the XOR Metric - The other major DHT design

Comments